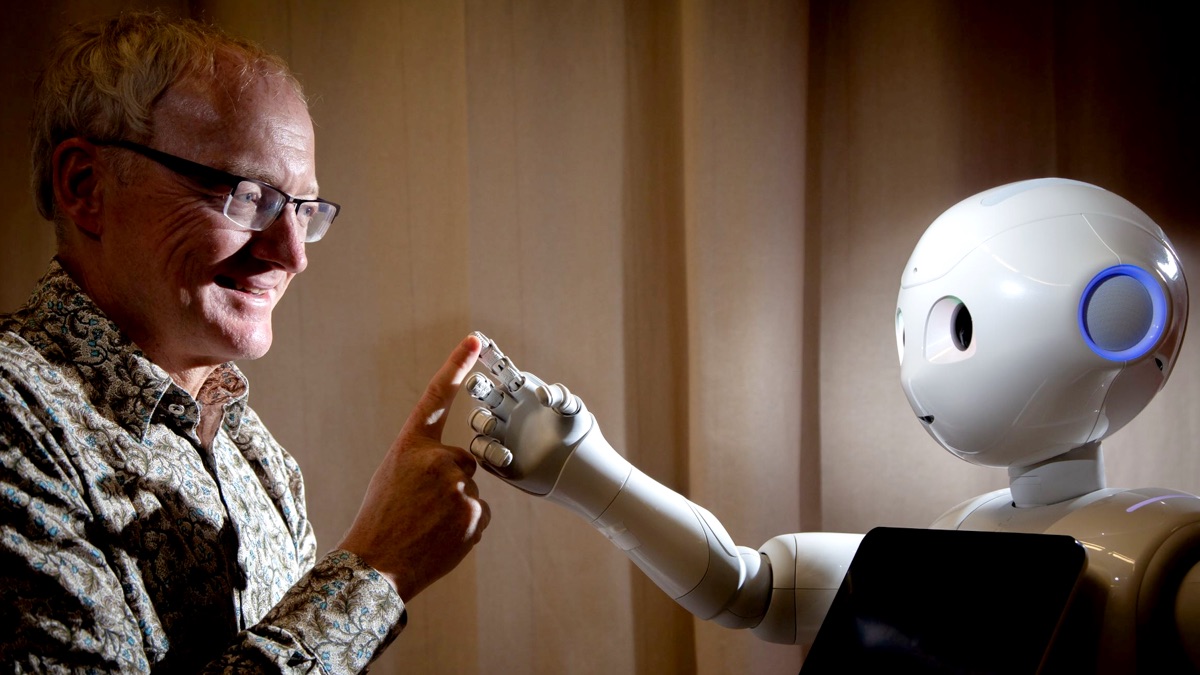

The first special-guest episode in the spring series is a fascinating chat about artificial intelligence, killer robots, and more with UNSW Scientia Professor Toby Walsh, chief scientist of the brand new UNSW AI Institute.

In this episode we talk about neural networks, the dangers of algorithmic policing, Google Duplex, cryptocurrency, HAL 9000 (obviously) and other disembodied computers, tribbles, whether red-eyed robots will take over the world, and even the Australian government’s illegal “robodebt” scheme.

This podcast is available on Amazon Music, Apple Podcasts, Castbox, Deezer, Google Podcasts, iHeartRadio, JioSaavn, Pocket Casts, Podcast Addict, Podchaser, Spotify, and Spreaker.

You can also listen to the podcast below, or subscribe to the generic podcast feed.

Podcast: Play in new window | Download (Duration: 1:07:17 — 61.6MB)


Thank you, Media Freedom Citizenry

The 9pm Edict is supported by the generosity of its listeners. You can throw a few coins into the tip jar or subscribe for special benefits. Please consider.

For this episode, it’s thanks once again to all the generous people who contributed to The 9pm Spring Series 2022 crowdfunding campaign.

CONVERSATION TOPIC: Gay Rainbow Anarchist and Richard Stephens.

THREE TRIGGER WORDS: Peter Sandilands, Peter Viertel, Phillip Merrick, Sheepie, and one person who chooses to remain anonymous.

ONE TRIGGER WORD: Andrew Groom, Bic Smith, Bic Smith (again), Bruce Hardie, Elana Mitchell, Errol Cavit, Frank Filippone, Gavin C, Joanna Forbes, John Lindsay, Jonathan Ferguson, Jonathan Ferguson (again), Joop de Wit, Karl Sinclair, Katrina Szetey, Mark Newton, Matthew Moyle-Croft, Michael Cowley, Miriam Mulcahy, Oliver Townshend, Paul Williams, Peter Blakeley, Peter McCrudden, Ric Hayman, Rohan Pearce, Syl Mobile, and four people who choose to remain anonymous.

PERSONALISED AUDIO MESSAGE: Mark Cohen and Rohan (not that one).

FOOT SOLDIERS FOR MEDIA FREEDOM who gave a SLIGHTLY LESS BASIC TIP: Andrew Kennedy, Benjamin Morgan, Bob Ogden, Garth Kidd, Jamie Morrison, Kimberley Heitman, Matt Arkell, Michael Strasser, Paul McGarry, Peter Blakeley, and two people who choose to remain anonymous.

MEDIA FREEDOM CITIZENS who contributed a BASIC TIP: Bren Carruthers, Elissa Harris, Opheli8, Raena Jackson Armitage, and Ron Lowry.

And another five people chose to have no reward, even though some of them were the most generous of all. Thank you all so much.

Series Credits

- The 9pm Edict theme by mansardian, via The Freesound Project.

- Edict fanfare by neonaeon, via The Freesound Project.

- Elephant Stamp theme by Joshua Mehlman.